The state of confusion can be described as standing at the crossroads. "Should I choose this path or that?"

How is ChatGPT confusion different from hallucination?

Hallucination is the model making up data when the required information is unavailable, whereas confusion lies in determining which set of data to consult. In our ChatGPT-based application ExpertGuru, we have incorporated diverse types and quantities of data to fulfill our business requirements. Consequently, situations may arise where the context of a query matches multiple references, leaving the application unsure which path to pursue.

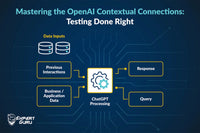

The underlying reason for the existence of confusion is data and its reference. The types of data are:

1. Business data

As mentioned, data supplies are of diverse types and quantities. Some are as below:

Reading this data means connecting related values. The values within a row are interconnected, and each value takes its meaning from its column label.

In the above table, we can see that there are multiple references for corn chips, I1, etc., and a sample framed question could be “Show me the batch details for Product ID I1” and the response could be as below:

The response includes I12 and I13, which is inaccurate when the search is for exactly I1.
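The difference between the inaccurate response and the expected one can be illustrated with a minimal sketch. The rows, column names, and batch numbers below are hypothetical; only the IDs I1, I12, and I13 and the product "corn chips" come from the text. This is not how ExpertGuru looks up data, only a way to show why a partial match over-returns.

```python
# Hypothetical inventory rows (assumed for illustration only).
rows = [
    {"product_id": "I1", "product": "corn chips", "batch": 31},
    {"product_id": "I12", "product": "corn chips", "batch": 35},
    {"product_id": "I13", "product": "potato chips", "batch": 36},
]

def substring_lookup(rows, product_id):
    """Loose match: every row whose ID merely contains the search term."""
    return [r["product_id"] for r in rows if product_id in r["product_id"]]

def exact_lookup(rows, product_id):
    """Strict match: only rows whose ID equals the search term."""
    return [r["product_id"] for r in rows if r["product_id"] == product_id]

print(substring_lookup(rows, "I1"))  # ['I1', 'I12', 'I13']
print(exact_lookup(rows, "I1"))      # ['I1']
```

For an identifier column such as Product ID, the strict result is the one a test case should expect.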

Testing Insights

- The tester should know the types of data involved and formulate test cases accordingly, discerning which values call for substring (partial-match) checks and which require an exact match.

- The tester should consider interconnected data test cases, Ex. “Give me the product ID and batch number for the inventory manufactured in the year 2024.”

- Mathematical operations should also be tested where they apply, Ex. Numeric columns can have queries like “Give me all the data whose batch number is greater than 34,” or “Give me all the data whose date of expiry is in the year 2025.”
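One way to turn the insights above into repeatable checks is to compute the expected answer directly from the data and compare it with the IDs mentioned in the bot's response. The sketch below assumes hypothetical inventory rows and dates, assumes product IDs look like `I` followed by digits, and treats the response as plain text. The helper names are illustrative and not part of ExpertGuru.

```python
import re
from datetime import date

# Hypothetical inventory; all values are assumed for illustration.
inventory = [
    {"product_id": "I1", "batch": 31, "manufactured": date(2024, 2, 10)},
    {"product_id": "I12", "batch": 35, "manufactured": date(2023, 11, 5)},
    {"product_id": "I13", "batch": 36, "manufactured": date(2024, 6, 1)},
]

def expected_ids(rows, predicate):
    """IDs of the rows that truly satisfy the query condition."""
    return {r["product_id"] for r in rows if predicate(r)}

def ids_in_response(text):
    """IDs mentioned in a response; \\b keeps 'I1' from matching inside 'I12'."""
    return set(re.findall(r"\bI\d+\b", text))

def check(response_text, rows, predicate):
    """True only if the response names exactly the expected IDs."""
    return ids_in_response(response_text) == expected_ids(rows, predicate)

def made_in_2024(row):
    return row["manufactured"].year == 2024

def batch_above_34(row):
    return row["batch"] > 34

print(check("Products I1 and I13 were manufactured in 2024.", inventory, made_in_2024))
print(check("Batches above 34: I12, I13, and I1.", inventory, batch_above_34))
```

The first check passes, while the second fails because I1 (batch 31) should not appear in a "greater than 34" answer.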

Document format data

Various kinds of document data, such as policies, descriptions, About pages, and FAQs, can be integrated into our application.

Ex. In a school application encompassing aspects such as children's admissions, staff, primary and secondary teachers, office personnel, etc., each category has distinct eligibility criteria, including factors like age, domain, leave policies, etc. When faced with queries like "What is the marriage leave policy for teachers?" it becomes essential to determine whether the application should refer to the policy applicable to primary or secondary teachers.

Testing Insights

The tester should craft test cases by evaluating matching data across two or more documents. When a query involves common data, it is essential to ascertain if all relevant cases are appropriately addressed. Additionally, when historical relevance is in play, it is crucial to confirm that the data is referenced from the correct document.
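The cross-document check described above can be sketched as follows, using the school example. The document index, its topics, and the sample responses are hypothetical; a real test would derive them from the documents actually loaded into the application.

```python
# Hypothetical index: category of staff -> topics its policy document covers.
documents = {
    "primary teachers": {"marriage leave", "sick leave"},
    "secondary teachers": {"marriage leave", "study leave"},
    "office personnel": {"sick leave"},
}

def matching_categories(documents, topic, audience):
    """Categories whose document covers the topic and whose name contains the audience word."""
    return sorted(
        category
        for category, topics in documents.items()
        if topic in topics and audience in category
    )

def covers_all(response_text, categories):
    """True if the response mentions every category the query could refer to."""
    text = response_text.lower()
    return all(category in text for category in categories)

candidates = matching_categories(documents, "marriage leave", "teachers")
print(candidates)  # ['primary teachers', 'secondary teachers']

complete = "For primary teachers the policy is A; for secondary teachers it is B."
partial = "As per the primary teachers policy, marriage leave is A."
print(covers_all(complete, candidates))  # True
print(covers_all(partial, candidates))   # False
```

When more than one category matches, the test can expect either a response covering all of them or a clarifying question. Which of the two is acceptable is a product decision this sketch does not settle.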

2. History-based data

Our bot, in responding to the current query, consults not only the data set but also the chat/session history, which allows it to tailor its responses. For instance, in the context of a women's clothing store, if previous discussions revolved around night dresses with a size mention of XL, when presented with a query like "Show me some partywear dresses," the expectation is for the bot to refer back and recommend dresses in the same size of XL. The query now has two references: the data set and the history. At times, the priority shifts from the current query to the history, resulting in responses based solely on past interactions while the current query is not answered at all. Moreover, when the bot encounters history-based questions, it sometimes begins the response with "Apologies for the confusion..." followed by the actual reply.

Testing Insights

The tester should build up a chat history as test data and then frame queries that are incomplete on their own (like “Show me something in blue also,” “Show me more,” “Drop all the yellow and purple options,” etc.) but that should produce better results once the chat history is taken into account.

Furthermore, available chat history should improve the user experience: the user should not need to enter the same common information again and again. Ex. If favourite colour and size were part of earlier interactions, they should be considered for the current query as well.

It is essential for the tester to validate that the response is an apt answer to the current query.
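A minimal sketch of the expectation behind these history-based tests: preferences from the history fill the gaps in the current query, but anything the current query states explicitly takes precedence. The filter keys and sample items are hypothetical, and the merge rule is an assumption about the desired behaviour rather than a description of how the bot works internally.

```python
def resolve_filters(history_prefs, query_filters):
    """Start from history, then let the current query override it."""
    resolved = dict(history_prefs)
    resolved.update(query_filters)
    return resolved

def all_items_match(items, filters):
    """True if every returned item satisfies every resolved filter."""
    return all(item.get(key) == value for item in items for key, value in filters.items())

# Earlier conversation: night dresses in size XL (example from the text).
history = {"category": "night dresses", "size": "XL"}
# Current query: "Show me some partywear dresses"
resolved = resolve_filters(history, {"category": "partywear dresses"})
print(resolved)  # {'category': 'partywear dresses', 'size': 'XL'}

good_response = [{"category": "partywear dresses", "size": "XL"}]
history_only_response = [{"category": "night dresses", "size": "XL"}]
print(all_items_match(good_response, resolved))          # True
print(all_items_match(history_only_response, resolved))  # False
```

The second response is the failure mode described earlier: the history wins and the current query is not answered.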

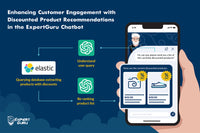

3. Biased data

Data may exhibit bias contingent on factors such as events, occasions, historical context, and prompt data. It is imperative to validate whether the present query receives an appropriate response, avoiding instances of excessive or insufficient bias. Instances have been noted where events or historical context are overly emphasized in crafting responses, diminishing the relevance of the current query. Likewise, testing should cover deliberately injected bias, such as during events, festivals, or sales, to confirm that the results are correspondingly appropriate. The application should adhere rigorously to prompt rules.

Ex. If we bias the system for Christmas, then Christmas-themed or red outfits should be given more priority.

Testing Insights

The tester should read the prompts, consider biasing context like occasions and history, and frame queries around them. The results of these test cases may manifest as either positive or negative. In the positive scenario, adherence to prompt rules and apt responses to occasion or history-based queries is confirmed. On the contrary, negative biasing instances may arise where the context, history, or occasion is accorded undue significance at the expense of addressing the current query.

Ex. Positive: The prompt instructs the bot not to give brand names in responses. “Suggest to me some online partywear brands.” The response is a graceful rejection of brand information.

Ex. Positive: History has the context of the user’s favourite colour red. “Show me some night dresses.” The response is around the user’s favourite colour red; also, if not available, it gracefully shows other colours saying, “It’s not red but you might like it....”

Ex. Negative: The occasion is Christmas. “Show me some blue outfits for men.” The response is around the Christmas theme and red in colour. Occasion consideration is given more importance than the current query.

Ex. Negative: History has the context of the user’s favourite colour red. “Show me some night dresses in blue.” The response is around the user’s favourite colour red. History consideration is given more importance than the current query.
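The positive and negative colour examples above can be expressed as a small classification rule. The sketch assumes a priority order of current query first, then history, then occasion. The text confirms only that the current query should outrank the other two, so the relative order of history and occasion is an assumption, as are the function names.

```python
from typing import Optional

def expected_colour(query: Optional[str], history: Optional[str],
                    occasion: Optional[str]) -> Optional[str]:
    """First colour available in the assumed priority order: query, history, occasion."""
    for colour in (query, history, occasion):
        if colour is not None:
            return colour
    return None

def classify(response_colour: str, query: Optional[str] = None,
             history: Optional[str] = None, occasion: Optional[str] = None) -> str:
    """'positive' if the response follows the priority order, else 'negative'."""
    wanted = expected_colour(query, history, occasion)
    if wanted is None or response_colour == wanted:
        return "positive"
    return "negative"

# History says red, query names no colour: red is an apt response.
print(classify("red", history="red"))                  # positive
# Occasion is Christmas (red), but the query asks for blue.
print(classify("red", query="blue", occasion="red"))   # negative
# History says red, but the query asks for blue.
print(classify("red", query="blue", history="red"))    # negative
```

The sketch does not model the graceful fallback ("It's not red but you might like it....") when the preferred colour is unavailable. A fuller check would need stock information as well.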

Challenges from our application ExpertGuru

1. In our responses, we encounter textual elements such as "I apologize for the confusion..." followed by a textual response addressing the current query.

2. Occasionally, part of a text response comes from the chat history while the rest addresses the current query, producing an uneven, incoherent response.

3. Sometimes a complete response is based on previous chat history and the current query isn’t even answered.

4. Our bot rejected some products in the past because they were not available, and it keeps rejecting them in later conversations even when they are available.

5. Because history is taken into account, once our bot fails on one query, the failure tends to persist in subsequent queries, creating a recurring issue.

6. Occasionally, queries related to orders were redirected to policy documents instead of the user's order database and vice versa.

7. Our bot gets confused when the historical context is disjointed, such as a shift in the query category. For instance, when the earlier queries in the history focused on children and the context then shifted abruptly to women, the bot's responses became muddled.
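Challenge 6 above lends itself to a simple regression table: each query is paired with the data source it should be answered from, and the observed source is compared against it. The queries, source labels, and the `observed` mapping are hypothetical. How the source behind a response is actually determined depends on what the application exposes, which this sketch does not assume.

```python
# Hypothetical test table: (query, data source expected to answer it).
cases = [
    ("Where is my order?", "orders"),
    ("What is the return policy?", "policy"),
    ("Cancel my last order.", "orders"),
]

def misrouted(cases, observed_sources):
    """Queries whose observed source differs from the expected one."""
    return [
        query
        for query, expected in cases
        if observed_sources.get(query) != expected
    ]

# Sources observed during a hypothetical test run.
observed = {
    "Where is my order?": "policy",       # wrongly sent to policy documents
    "What is the return policy?": "policy",
    "Cancel my last order.": "orders",
}
print(misrouted(cases, observed))  # ['Where is my order?']
```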

Conclusion

The tester should meticulously examine the prompts and the various forms of data input to the application, and then craft test cases based on these considerations. It is imperative to validate responses thoroughly. The developer/prompt engineer should be apprised of the scenarios found. The tester's adherence to these practices can aid in mitigating ambiguities, minimizing multiple interpretations, addressing biases, and recognizing model limitations.